Run sample applications

RUBIK Pi 3 Ubuntu 24.04 includes various sample applications. For details, see the Artificial Intelligence, Camera Software, and Robot Development chapters.

Before running these applications, note the following:

- Running the applications may result in high computational load. For a better experience, install a fan.

- To run the multimedia and AI applications, set up the Wi-Fi connection and establish an SSH connection.

- To view the display output, connect an HDMI display to the HDMI port of RUBIK Pi 3 by referring to Connect an HDMI display.

- To enable audio, refer to the Audio section.

Prerequisites for running sample applications

Before running the sample applications, enable the Weston display to activate the full functionality of the camera and AI capabilities. The steps are as follows:

- Update and install the dependencies.

sudo apt update && sudo apt upgrade

- Install Weston and test the basic display functionality.

- Install Weston and the related software packages.

sudo apt install weston-autostart gstreamer1.0-qcom-sample-apps gstreamer1.0-tools qcom-fastcv-binaries-dev qcom-video-firmware libgbm-msm1 qcom-adreno1

sudo reboot

- Set up the display environment as the root user.

sudo -i

export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)

- Connect the HDMI display and briefly wait for the Weston desktop to be displayed on the screen.

Note: If the Weston desktop is not displayed properly, try running the sudo dpkg-reconfigure weston-autostart command in the RUBIK Pi terminal.

- To test the graphics, run a sample application. The following example runs weston-simple-egl:

weston-simple-egl
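If a graphical client such as weston-simple-egl fails to start, one common cause is that Weston has not yet created its Wayland socket. The following is a minimal sketch of a check for that socket, not part of the RUBIK Pi tooling. The function name wait_for_wayland is hypothetical, and the sketch assumes the socket is named wayland-1 (the value this guide uses for WAYLAND_DISPLAY) and lives in XDG_RUNTIME_DIR.

```shell
# Wait until the Wayland socket created by Weston appears.
# Usage: wait_for_wayland [timeout_seconds]
# Assumption: XDG_RUNTIME_DIR is already exported as shown in this guide, and
# WAYLAND_DISPLAY defaults to wayland-1 when it is not set.
wait_for_wayland() {
    limit="${1:-10}"
    socket="${XDG_RUNTIME_DIR:?XDG_RUNTIME_DIR is not set}/${WAYLAND_DISPLAY:-wayland-1}"
    elapsed=0
    while :; do
        # -S is true only if the path exists and is a socket.
        if [ -S "$socket" ]; then
            echo "Wayland socket found: $socket"
            return 0
        fi
        if [ "$elapsed" -ge "$limit" ]; then
            break
        fi
        sleep 1
        elapsed=$((elapsed + 1))
    done
    echo "No Wayland socket at $socket after ${limit}s" >&2
    return 1
}
```

For example, `wait_for_wayland 15 && weston-simple-egl` would start the test client only once the socket exists, and print a diagnostic otherwise.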

Run multimedia sample applications

The multimedia sample applications show various use cases for the camera, display, and video streaming capabilities of RUBIK Pi.

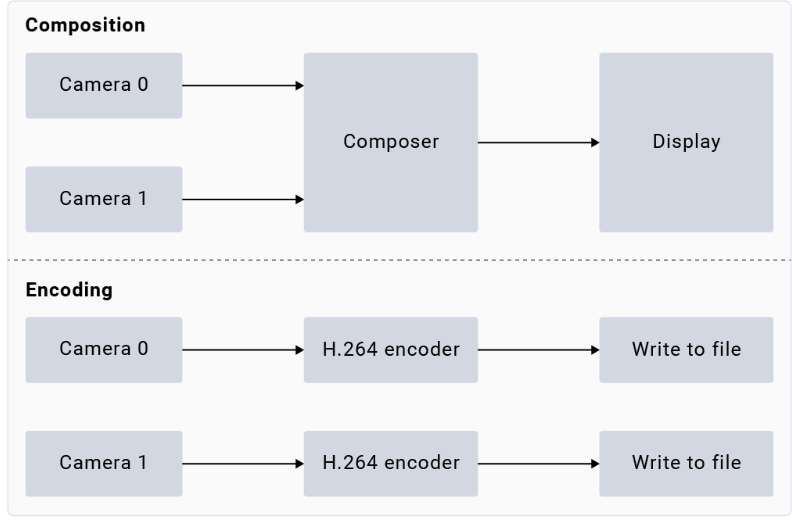

- Multi-camera streaming or encoding (dashcam)

- Multichannel video decode and compose (Video wall)

The gst-multi-camera-example command-line application demonstrates simultaneous streaming from two camera sensors on RUBIK Pi 3. This application composites the video streams side by side and displays them on a monitor, or encodes the video streams and saves them to a file.

Example

Before running the application, make sure the Weston display is enabled. To launch the application, run the following use case from the SSH terminal:

- Install the camera-related software.

- Add the RUBIK Pi PPA to Ubuntu sources and update the package list:

sudo sed -i '$a deb http://apt.thundercomm.com/rubik-pi-3/noble ppa main' /etc/apt/sources.list

sudo apt update

- Install the camera software.

sudo apt install -y qcom-ib2c qcom-camera-server qcom-camx

sudo apt install -y rubikpi3-cameras

sudo chmod -R 755 /opt

sudo mkdir -p /var/cache/camera/

sudo touch /var/cache/camera/camxoverridesettings.txt

sudo sh -c 'echo enableNCSService=FALSE >> /var/cache/camera/camxoverridesettings.txt'

- To view the sample application on the HDMI display, run the following export command:

export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && export WAYLAND_DISPLAY=wayland-1

Note: If Weston is not automatically enabled, start two secure shell instances: one to enable Weston, and another to run the application.

- To enable Weston, run the following command in the first shell:

export GBM_BACKEND=msm && export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && mkdir -p $XDG_RUNTIME_DIR && weston --continue-without-input --idle-time=0

- To set up the Wayland Display environment, run the following command in the second shell:

export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && export WAYLAND_DISPLAY=wayland-1

- To view the waylandsink output, run the following command:

gst-multi-camera-example -o 0

- To store the encoder output, follow these steps:

- Run the following command:

gst-multi-camera-example -o 1

The device stores the encoded files in /opt/cam1_vid.mp4 and /opt/cam2_vid.mp4, for camera 1 and camera 2 respectively.

- Run the following command on the host computer to copy a file from the device:

scp ubuntu@<IP address of target device>:/opt/cam1_vid.mp4 <destination directory>

- To play the encoder output, use any media player that supports MP4 files.

- To stop the use case, press Ctrl + C.

- To display the available help options, run the following command:

gst-multi-camera-example --help

- The GST_DEBUG environment variable controls the GStreamer debug output. Set the desired level to enable logging. For example, to log all warnings, run the following command:

export GST_DEBUG=2
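The encode use case writes one file per camera, while the scp example above copies only cam1_vid.mp4. As a rough sketch, the loop below copies both recordings from the host side. The function name fetch_recordings and the DRY_RUN switch are hypothetical helpers introduced here for illustration; the file names and the ubuntu user come from this guide.

```shell
# Copy both encoded recordings from the device to the host.
# Usage: fetch_recordings <device-ip> <destination-dir>
# Set DRY_RUN=1 to print the scp commands instead of running them.
fetch_recordings() {
    device_ip="$1"
    dest_dir="$2"
    for name in cam1_vid.mp4 cam2_vid.mp4; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "scp ubuntu@${device_ip}:/opt/${name} ${dest_dir}/"
        else
            # Stop at the first failed copy so errors are not masked.
            scp "ubuntu@${device_ip}:/opt/${name}" "${dest_dir}/" || return 1
        fi
    done
}
```

Running it once with DRY_RUN=1 is a cheap way to confirm the device address and destination before any data is transferred.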

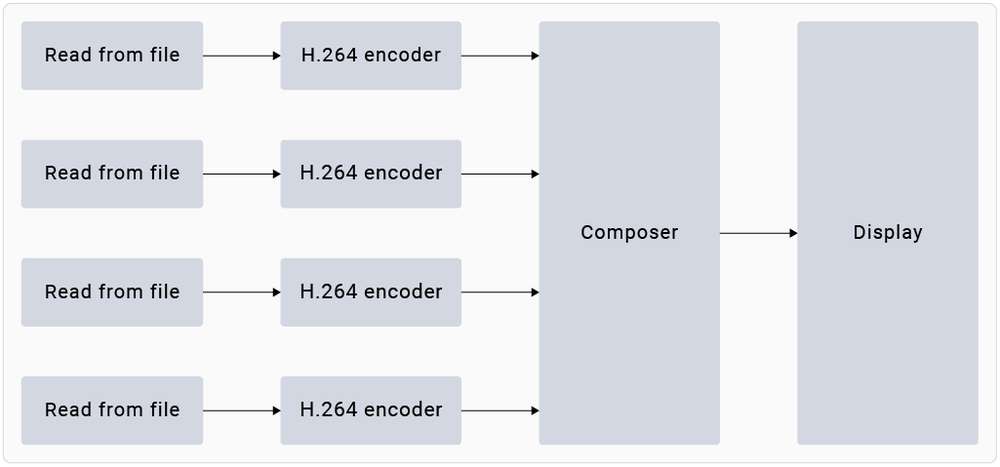

The gst-concurrent-videoplay-composition command-line application allows concurrent video decoding and playback for AVC-coded videos and composes them on a display for video wall applications. The application requires at least one input video file, which should be an MP4 file with the AVC codec.
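Because the application expects AVC (H.264) video, it can help to check a file on the host computer before copying it to the device. The following is a minimal sketch that assumes ffprobe (from FFmpeg) is installed on the host; ffprobe is not part of the RUBIK Pi instructions, and the function name is_avc_video is hypothetical.

```shell
# Report whether the first video stream of a file is H.264 (AVC).
# Returns 0 for H.264, 1 for any other codec, 2 if the file cannot be probed.
# Assumption: ffprobe from FFmpeg is available on the host computer.
is_avc_video() {
    file="$1"
    [ -f "$file" ] || { echo "No such file: $file" >&2; return 2; }
    # Print only the codec name of the first video stream, e.g. "h264".
    codec=$(ffprobe -v error -select_streams v:0 \
        -show_entries stream=codec_name \
        -of default=noprint_wrappers=1:nokey=1 "$file") || return 2
    [ "$codec" = "h264" ]
}
```

For example, `is_avc_video clip.mp4 && echo "clip.mp4 is AVC"` prints the message only for H.264 input. The sketch checks the codec only, not the container format.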

Before running the application, make sure the Weston display is enabled. To launch the application, run the following use case from the SSH terminal:

- Install the camera-related software.

- Add the RUBIK Pi PPA to Ubuntu sources and update the package list:

sudo sed -i '$a deb http://apt.thundercomm.com/rubik-pi-3/noble ppa main' /etc/apt/sources.list

sudo apt update

- Install the camera software.

sudo apt install -y qcom-ib2c qcom-camera-server qcom-camx

sudo apt install -y rubikpi3-cameras

sudo chmod -R 755 /opt

sudo mkdir -p /var/cache/camera/

sudo touch /var/cache/camera/camxoverridesettings.txt

sudo sh -c 'echo enableNCSService=FALSE >> /var/cache/camera/camxoverridesettings.txt'

- To transfer prerecorded or test videos in the AVC-encoded (H.264) MP4 format to your device, run the following command on the host computer, where <file_name> is the name of the video file:

scp <file_name> ubuntu@[DEVICE IP-ADDR]:/opt/

- To view the sample application on the HDMI display, run the following export command from the SSH terminal:

export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && export WAYLAND_DISPLAY=wayland-1

If Weston is not automatically enabled, start two secure shell instances - one to enable Weston, and another to run the application.

- To enable Weston, run the following command in the first shell:

export GBM_BACKEND=msm && export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && mkdir -p $XDG_RUNTIME_DIR && weston --continue-without-input --idle-time=0

- To set up the Wayland Display environment, run the following command in the second shell:

export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && export WAYLAND_DISPLAY=wayland-1

- To start concurrent playback for four channels, run the following command:

gst-concurrent-videoplay-composition -c 4 -i /opt/<file_name1>.mp4 -i /opt/<file_name2>.mp4 -i /opt/<file_name3>.mp4 -i /opt/<file_name4>.mp4

- -c: specifies the number of streams to be decoded for composition. The value can be 2, 4, or 8.

- -i: specifies the absolute path to the input video file.

- To stop the use case, press Ctrl + C.

- To display the available help options, run the following command:

gst-concurrent-videoplay-composition --help

- The GST_DEBUG environment variable controls the GStreamer debug output. Set the required level to enable logging. For example, to log all warnings, run the following command:

export GST_DEBUG=2
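The -c and -i options described above are easy to get out of step when typing several paths by hand. The sketch below assembles the command line from a list of files and rejects stream counts other than 2, 4, or 8. It assumes one input file per stream, as in the four-channel example; the function name build_videowall_cmd is hypothetical, and the function only prints the command rather than running it.

```shell
# Print the gst-concurrent-videoplay-composition command for a set of files.
# Usage: build_videowall_cmd <file1> <file2> [...]
# Assumption: one input file per stream, so -c equals the number of files.
# Paths containing spaces are not handled by this sketch.
build_videowall_cmd() {
    count=$#
    case "$count" in
        2|4|8) ;;
        *) echo "Expected 2, 4, or 8 input files, got $count" >&2; return 1 ;;
    esac
    cmd="gst-concurrent-videoplay-composition -c $count"
    for file in "$@"; do
        # -i requires an absolute path.
        case "$file" in
            /*) ;;
            *) echo "Not an absolute path: $file" >&2; return 1 ;;
        esac
        cmd="$cmd -i $file"
    done
    echo "$cmd"
}
```

For example, `build_videowall_cmd /opt/a.mp4 /opt/b.mp4` prints a two-channel command that can be reviewed and then pasted into the SSH terminal.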

Run AI sample applications

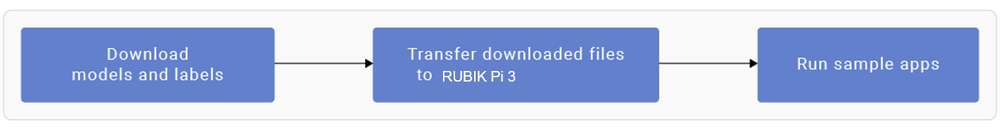

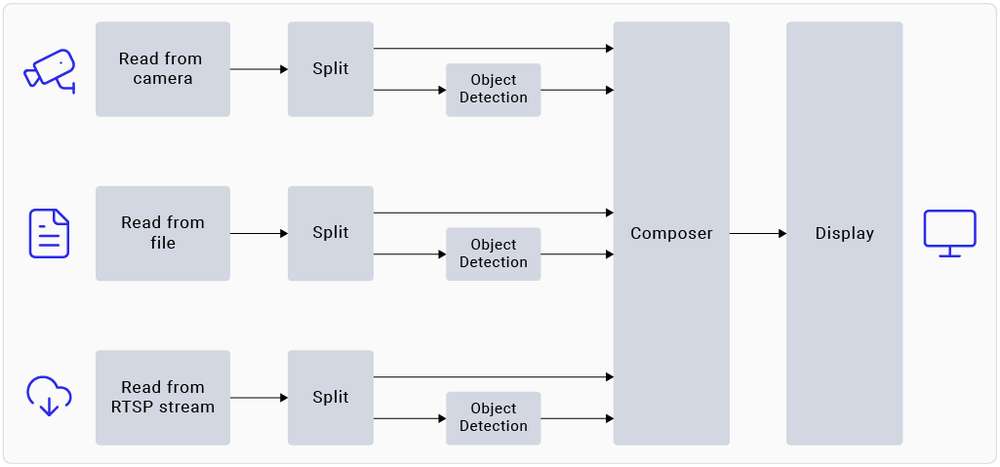

AI sample applications perform use cases for object detection and parallel inferencing on camera, video file, or Real-Time Streaming Protocol (RTSP) input streams on the RUBIK Pi 3 device. The procedure involves downloading the models from Qualcomm® AI Hub and labels from GitHub, transferring them to RUBIK Pi 3, and running the sample applications.

Prerequisites

The device requires models and label files to run the AI sample applications.

Procedure

Before running the application, make sure the Weston display is enabled. To launch the application, run the following use case from the SSH terminal:

- Install the camera-related software.

- Add the RUBIK Pi PPA to Ubuntu sources and update the package list:

sudo sed -i '$a deb http://apt.thundercomm.com/rubik-pi-3/noble ppa main' /etc/apt/sources.list

sudo apt update

- Install the camera software.

sudo apt install -y qcom-ib2c qcom-camera-server qcom-camx

sudo apt install -y rubikpi3-cameras

sudo chmod -R 755 /opt

sudo mkdir -p /var/cache/camera/

sudo touch /var/cache/camera/camxoverridesettings.txt

sudo sh -c 'echo enableNCSService=FALSE >> /var/cache/camera/camxoverridesettings.txt'

- You need the following models for the AI sample applications:

| Sample application | Required model | Required label file |
|---|---|---|
| AI object detection | yolov8_det_quantized.tflite | yolonas.labels |
| Parallel AI inference | yolov8_det_quantized.tflite | yolov8.labels |
| Parallel AI inference | inception_v3_quantized.tflite | classification.labels |
| Parallel AI inference | hrnet_pose_quantized.tflite | hrnet_pose.labels |
| Parallel AI inference | deeplabv3_plus_mobilenet_quantized.tflite | deeplabv3_resnet50.labels |

- Download and run the automated script to get the model and label files on the device:

curl -L -O https://raw.githubusercontent.com/quic/sample-apps-for-qualcomm-linux/refs/heads/main/download_artifacts.sh

chmod +x download_artifacts.sh

./download_artifacts.sh -v GA1.4-rel -c QCS6490

The YOLOv8 models are not part of the script. You need to export these models using the Qualcomm AI Hub APIs.

- Export YOLOv8 from Qualcomm AI Hub.

Follow these validated instructions to export models on your host computer using Ubuntu 22.04. You can also run these instructions on Windows through Windows Subsystem for Linux (WSL) or set up an Ubuntu 22.04 virtual machine on macOS. For more details, see Virtual Machine Setup Guide.

- Obtain the shell script for exporting the models:

wget https://raw.githubusercontent.com/quic/sample-apps-for-qualcomm-linux/refs/heads/main/scripts/export_model.sh

- Update the script permissions to make it executable:

chmod +x export_model.sh

- Run the export script with your Qualcomm AI Hub API token as the value for the --api-token argument:

./export_model.sh --api-token=<Your AI Hub API Token>

Note: You can find your Qualcomm AI Hub API token in your account settings.

- The script downloads the models to the build directory. Copy these models to the /etc/models/ directory of your device using the following commands:

scp <working directory>/build/yolonas_quantized/yolonas_quantized.tflite ubuntu@<IP address of target device>:/etc/models/

scp <working directory>/build/yolov8_det_quantized/yolov8_det_quantized.tflite ubuntu@<IP address of target device>:/etc/models/

- Update the q_offset and q_scale constants of the quantized LiteRT model in the JSON file. For instructions, refer to Get the model constants.

- Use the following command to push the downloaded model files to the device:

scp <model filename> ubuntu@<IP addr of the target device>:/etc/models

Example

wget https://thundercomm.s3.dualstack.ap-northeast-1.amazonaws.com/uploads/web/rubik-pi-3/tools/rubikpi3_ai_sample_apps_models_labels.zip

unzip rubikpi3_ai_sample_apps_models_labels.zip

cd rubikpi3_ai_sample_apps_models_labels

scp inception_v3_quantized.tflite ubuntu@<IP addr of the target device>:/etc/models/

scp yolonas.labels ubuntu@<IP addr of the target device>:/etc/labels/

- Create a directory for test videos using the following commands:

ssh ubuntu@<ip-addr of the target device>

mount -o remount,rw /usr

mkdir /etc/media/

- From the host computer, push the test video files to the device:

scp <filename>.mp4 ubuntu@<IP address of target device>:/etc/media/
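The PPA step earlier in this procedure uses sed to append a line to /etc/apt/sources.list, so repeating the step adds the same entry again. As a minimal sketch of an alternative, the helper below appends a line only when that exact line is not already in the file. The function name append_line_once is hypothetical and is not part of the RUBIK Pi tooling.

```shell
# Append a line to a file only if that exact line is not already present.
# Usage: append_line_once <file> <line>
append_line_once() {
    file="$1"
    line="$2"
    # -x matches whole lines, -F treats the pattern as a fixed string.
    if grep -qxF -- "$line" "$file" 2>/dev/null; then
        echo "Already present in $file"
    else
        printf '%s\n' "$line" >> "$file"
        echo "Added to $file"
    fi
}
```

On the device, this would be defined and called from a root shell (for example, one opened with sudo -i), since /etc/apt/sources.list is not writable by the ubuntu user: `append_line_once /etc/apt/sources.list 'deb http://apt.thundercomm.com/rubik-pi-3/noble ppa main'`.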

- AI object detection

- Parallel AI inference

The gst-ai-object-detection sample application demonstrates the hardware capability to detect objects on camera, video file, or RTSP input streams. The pipeline receives the input stream, preprocesses it, runs inferences on AI hardware, and displays the results on the screen.

Example

You must push the model and label files to the device to run the sample application. For details, see Procedure.
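A quick way to confirm that the files reached the device is to check for them before launching the application. The following is a minimal sketch; the function name check_required_files is hypothetical, and the paths in the usage note are the ones this guide uses for the object detection configuration.

```shell
# Check that each listed file exists and is non-empty; report any that are missing.
# Usage: check_required_files <file> [...]
check_required_files() {
    missing=0
    for item in "$@"; do
        # -s is true only for an existing file with a size greater than zero.
        if [ -s "$item" ]; then
            echo "ok       $item"
        else
            echo "missing  $item" >&2
            missing=$((missing + 1))
        fi
    done
    [ "$missing" -eq 0 ]
}
```

On the device, `check_required_files /etc/models/yolov8_det_quantized.tflite /etc/labels/yolonas.labels` returns a non-zero status if either file is absent or empty, which also catches interrupted transfers that left a zero-byte file.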

- Begin a new SSH session and start the HDMI display monitor if you haven't already:

ssh ubuntu@<ip-addr of the target device>

- To view the sample application on the HDMI display, run the following export command from the SSH terminal:

export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && export WAYLAND_DISPLAY=wayland-1

If Weston is not automatically enabled, start two secure shell instances - one to enable Weston, and another to run the application.

- To enable Weston, run the following command in the first shell:

export GBM_BACKEND=msm && export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && mkdir -p $XDG_RUNTIME_DIR && weston --continue-without-input --idle-time=0

- To set up the Wayland Display environment, run the following command in the second shell:

export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && export WAYLAND_DISPLAY=wayland-1

- Modify the /etc/configs/config_detection.json file on your device.

{

"file-path": "/etc/media/video.mp4",

"ml-framework": "tflite",

"yolo-model-type": "yolov8",

"model": "/etc/models/yolov8_det_quantized.tflite",

"labels": "/etc/labels/yolonas.labels",

"constants": "YOLOv8,q-offsets=<21.0, 0.0, 0.0>,q-scales=<3.0546178817749023, 0.003793874057009816, 1.0>;",

"threshold": 40,

"runtime": "dsp"

}

| Field | Values/description |

|---|---|

| ml-framework | |

| snpe | Uses the Qualcomm® Neural Processing SDK models |

| tflite | Uses the LiteRT models |

| qnn | Uses the Qualcomm® AI Engine direct models |

| yolo-model-type | |

| yolov5 yolov8 yolonas | Runs the YOLOv5, YOLOv8, and YOLO-NAS models, respectively. See Sample model and label files. |

| runtime | |

| cpu | Runs on the CPU |

| gpu | Runs on the GPU |

| dsp | Runs on the digital signal processor (DSP) |

| Input source | |

| camera | 0: primary camera; 1: secondary camera |

| file-path | Directory path to the video file |

| rtsp-ip-port | Address of the RTSP stream in rtsp://<ip>:<port>/<stream> format |

- To start the application, run the following command:

gst-ai-object-detection

- To stop the use case, press Ctrl + C.

- To display the available help options, run the following command:

gst-ai-object-detection -h

- The GST_DEBUG environment variable controls the GStreamer debug output. Set the required level to enable logging. For example, to log all warnings, run the following command:

export GST_DEBUG=2
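Hand edits to the configuration file can leave it malformed, for example through a trailing comma or a missing quote. The sketch below checks that the file parses as JSON before the application is started. It assumes python3 is available on the device and uses its standard json.tool module; the function name validate_json_config is hypothetical. It checks syntax only, not whether the field values are accepted by the application.

```shell
# Check that a config file is well-formed JSON before launching the application.
# Usage: validate_json_config [config-file]
# Assumption: python3 is installed; json.tool is part of its standard library.
validate_json_config() {
    config="${1:-/etc/configs/config_detection.json}"
    if python3 -m json.tool "$config" >/dev/null 2>&1; then
        echo "Valid JSON: $config"
    else
        echo "Invalid or unreadable JSON: $config" >&2
        return 1
    fi
}
```

For example, `validate_json_config && gst-ai-object-detection` would launch the application only when the default configuration file parses cleanly.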

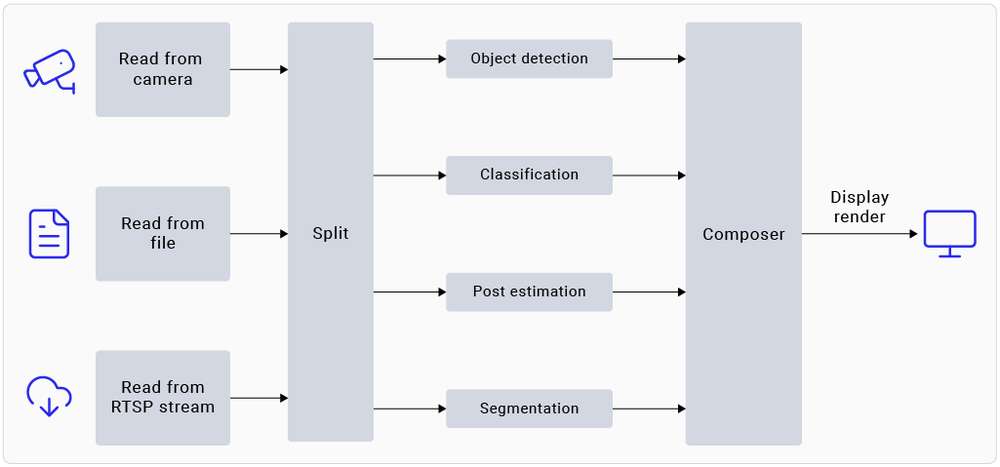

The gst-ai-parallel-inference command-line application demonstrates the hardware capability to perform four parallel AI inferences on camera, video file, or RTSP input streams. The pipeline detects objects, classifies objects, detects poses, and segments images on the input stream. The screen displays the results side-by-side.

Example

You must push the model and label files to the device to run the sample application. For details, see Procedure.

- Begin a new SSH session and start the HDMI display monitor if you haven't already:

ssh ubuntu@<ip-addr of the target device>

- To view the sample application on the HDMI display, run the following export command from the SSH terminal:

export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && export WAYLAND_DISPLAY=wayland-1

If Weston is not automatically enabled, start two secure shell instances - one to enable Weston, and another to run the application.

- To enable Weston, run the following command in the first shell:

export GBM_BACKEND=msm && export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && mkdir -p $XDG_RUNTIME_DIR && weston --continue-without-input --idle-time=0

- To set up the Wayland Display environment, run the following command in the second shell:

export XDG_RUNTIME_DIR=/run/user/$(id -u ubuntu)/ && export WAYLAND_DISPLAY=wayland-1

- Run the following command to push the downloaded model file to the device:

scp <model filename> ubuntu@<IP addr of the target device>:/etc/models

Example

wget https://thundercomm.s3.dualstack.ap-northeast-1.amazonaws.com/uploads/web/rubik-pi-3/tools/rubikpi3_ai_sample_apps_models_labels.zip

unzip rubikpi3_ai_sample_apps_models_labels.zip

cd rubikpi3_ai_sample_apps_models_labels

scp yolov8_det_quantized.tflite ubuntu@<IP addr of the target device>:/etc/models/

scp yolov8.labels ubuntu@<IP addr of the target device>:/etc/labels/

scp inception_v3_quantized.tflite ubuntu@<IP addr of the target device>:/etc/models/

scp classification.labels ubuntu@<IP addr of the target device>:/etc/labels/

scp hrnet_pose_quantized.tflite ubuntu@<IP addr of the target device>:/etc/models/

scp hrnet_pose.labels ubuntu@<IP addr of the target device>:/etc/labels/

scp deeplabv3_plus_mobilenet_quantized.tflite ubuntu@<IP addr of the target device>:/etc/models/

scp deeplabv3_resnet50.labels ubuntu@<IP addr of the target device>:/etc/labels/

- To start the application, run the following command:

gst-ai-parallel-inference

- To stop the use case, press Ctrl + C.

- To display the available help options, run the following command:

gst-ai-parallel-inference -h

- Qualcomm AI Hub often updates models with the latest SDK versions. Using the wrong model constants may lead to inaccurate results. If you face such issues, update the model constants. Provide the model constants for the sample application using the following command:

gst-ai-parallel-inference -s /etc/media/video.mp4 \

--object-detection-constants="YOLOv8,q-offsets=<21.0, 0.0, 0.0>,q-scales=<3.0546178817749023, 0.003793874057009816, 1.0>;" \

--pose-detection-constants="Posenet,q-offsets=<8.0>,q-scales=<0.0040499246679246426>;" \

--segmentation-constants="deeplab,q-offsets=<0.0>,q-scales=<1.0>;" \

--classification-constants="Inceptionv3,q-offsets=<38.0>,q-scales=<0.17039915919303894>;"

- The GST_DEBUG environment variable controls the GStreamer debug output. Set the required level to enable logging. For example, to log all warnings, run the following command:

export GST_DEBUG=2
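Exporting GST_DEBUG affects every later GStreamer command in the same shell. As a minimal sketch, the wrapper below enables debug output for a single command and writes it to a file through GST_DEBUG_FILE, a standard GStreamer environment variable. The function name run_with_gst_debug and the log path in the usage note are hypothetical.

```shell
# Run one command with GStreamer debug logging enabled for that command only.
# Usage: run_with_gst_debug <level> <log-file> <command> [args...]
# GST_DEBUG_FILE redirects the debug output to a file instead of stderr.
run_with_gst_debug() {
    level="$1"
    log_file="$2"
    shift 2
    # env sets the variables for the child process without exporting them
    # into the calling shell.
    env GST_DEBUG="$level" GST_DEBUG_FILE="$log_file" "$@"
}
```

For example, `run_with_gst_debug 2 /tmp/gst-parallel.log gst-ai-parallel-inference` keeps the warnings out of the terminal and leaves the shell environment unchanged for later runs.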

Known issues

In pose detection, the model detects only one person, even if many people are present in the frame.

The Inception v3 image classification model is trained on the ImageNet data set. As a result, the model can't classify a person because this class isn't included in the data set.

More applications

The release offers various sample applications. To explore more, see the Artificial Intelligence, Camera Software, and Robot Development chapters.